Once AI is running across multiple underwriting teams (handling submissions, touching cedant data, pushing outputs into Guidewire), the questions change. It's no longer whether it works. It's who can access it, whether its outputs are consistent, and what you show a regulator when they ask how a specific decision was made.

That last question is no longer hypothetical. According to the National Association of Insurance Commissioners (NAIC), nearly 30 states have adopted its AI Model Bulletin, which sets expectations for how insurers govern their use of AI and outlines what regulators may request during an examination. The AI itself won't answer those questions, but what's built around it will.

Without governance infrastructure, a regulatory inquiry into an AI-assisted decision can take two weeks to reconstruct from screenshots and email threads. A workflow inconsistency that produces different outputs for the same risk class doesn't surface until a broker notices or an auditor does.

The teams that get AI into permanent production treat governance as infrastructure from the start. What they get on the other side is an AI program that leadership trusts, that compliance can sign off on, and that practitioners actually use.

Know which workflows are running, and which ones stalled

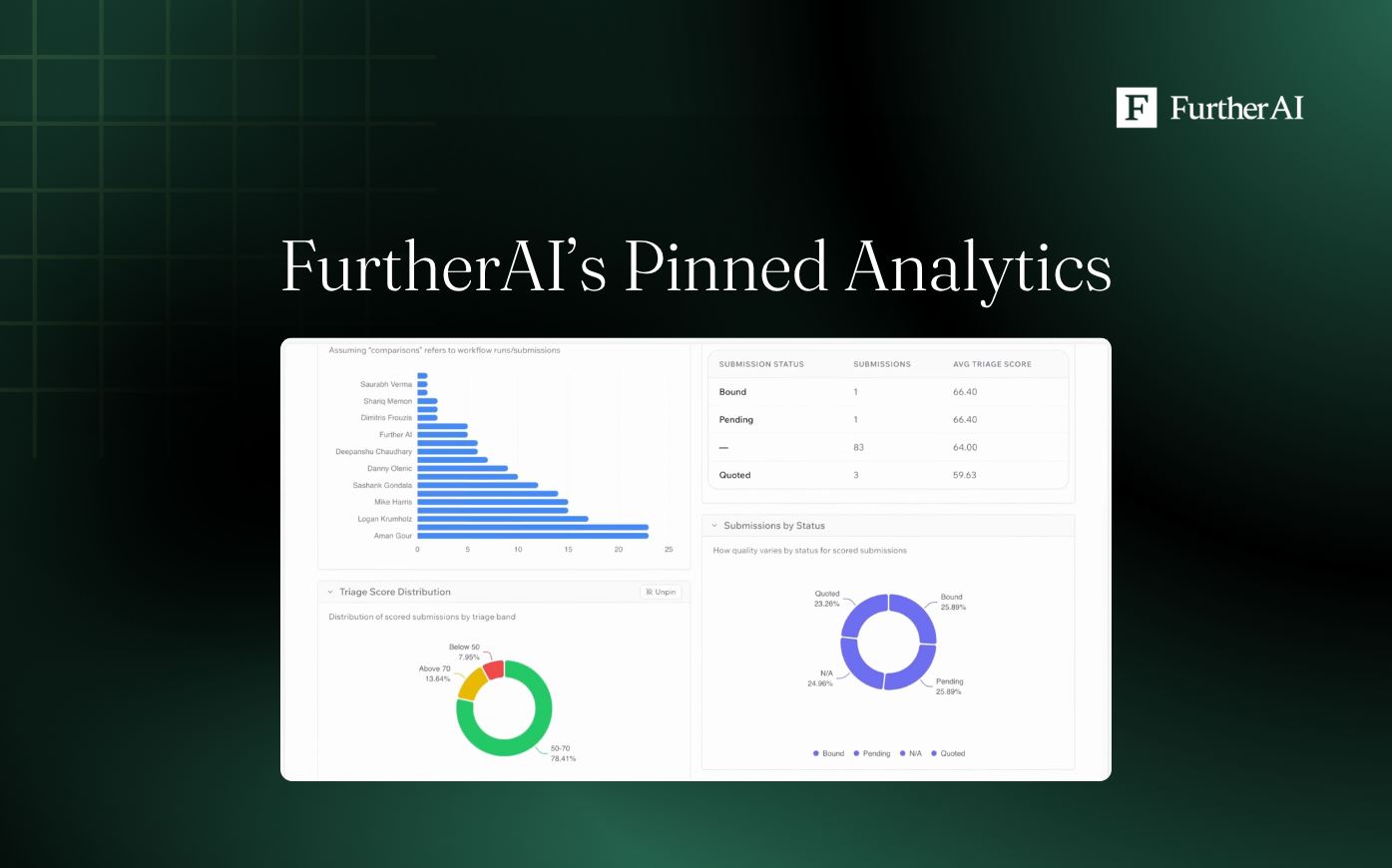

Insurance workflows run across multiple lines of business, handled by teams in different offices with different risk classes and appetites. Usage data therefore has to go deeper than headcount activity and surface patterns by workflow type, by line of business, and by team.

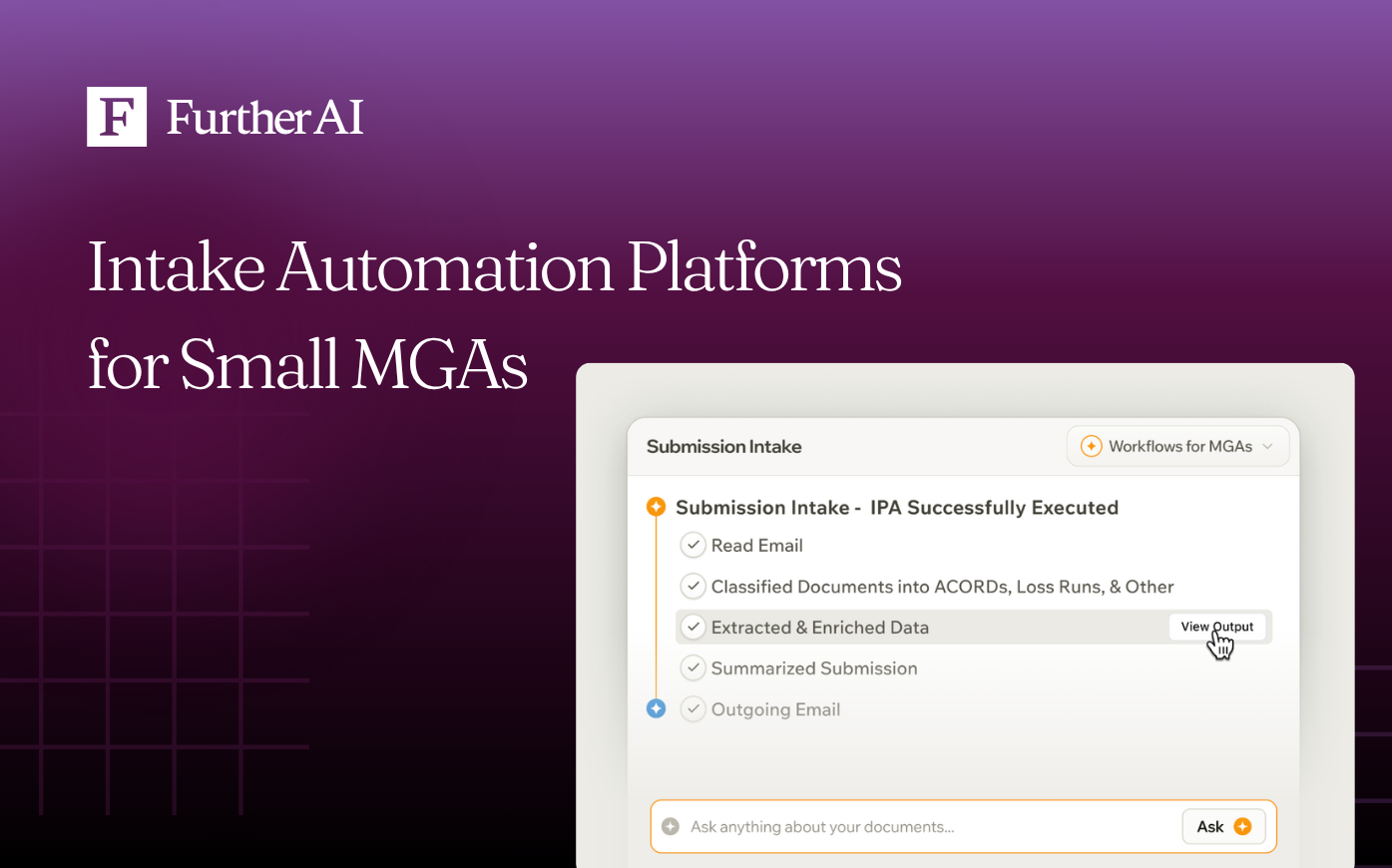

FurtherAI's admin dashboard gives ops leaders a real-time view of adoption across every deployed workflow: submission intake, claims intake, policy audit, underwriting audit, SOV validation, and loss run validation. Workflow completion rates show the difference between a tool that's being opened and a workflow that's actually running end-to-end.

With that view, an ops leader can see that renewal review is at full adoption in commercial property but barely used in excess and surplus (E&S) lines, and can respond with a targeted decision: a workflow enhancement or a training session for that team. Without that specificity, an ops leader can't tell where the deployment is working and where it isn't, and the actual friction goes unaddressed.
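To make the granularity concrete, here is a minimal sketch of how completion rates could be broken out by line of business and workflow. The event tuples, field order, and workflow names are hypothetical and chosen for illustration; this is not FurtherAI's data model or export format.

```python
from collections import defaultdict

# Hypothetical usage events: (line_of_business, workflow, completed_end_to_end).
# These values are illustrative only, not real usage data.
events = [
    ("commercial_property", "renewal_review", True),
    ("commercial_property", "renewal_review", True),
    ("commercial_property", "renewal_review", False),
    ("e_and_s", "renewal_review", False),
    ("e_and_s", "renewal_review", False),
    ("e_and_s", "submission_intake", True),
]


def completion_rates(events):
    """Return {(line_of_business, workflow): completed runs / started runs}."""
    started = defaultdict(int)
    completed = defaultdict(int)
    for lob, workflow, done in events:
        key = (lob, workflow)
        started[key] += 1
        if done:
            completed[key] += 1
    return {key: completed[key] / started[key] for key in started}


rates = completion_rates(events)
for (lob, workflow), rate in sorted(rates.items()):
    print(f"{lob:20s} {workflow:18s} {rate:.0%}")
```

Grouping on the pair rather than on users alone is what separates "the tool was opened" from "this workflow finishes for this team".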

Give leadership a number, not a demo

At some point in every AI deployment, leadership asks what is actually being produced. They’re not interested in a walkthrough of the platform or even a projection of future value. They want a quantifiable ROI.

The problem is, generic platform analytics don't answer that question in insurance. Standard metrics like active user counts and session duration tell an ops leader nothing about underwriting performance. The metrics that matter are tied to outcomes: turnaround time from submission receipt to underwriter review, straight-through processing rate, extraction accuracy on ACORD forms and loss runs, and underwriter review time per account.
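As a rough illustration, the sketch below computes three of these outcome metrics from a handful of submission records. The record fields (`received`, `reviewed`, `human_touches`, `fields_extracted`, `fields_correct`) are assumptions made for this example, and straight-through processing is assumed here to mean a submission that needed no human touch.

```python
from datetime import datetime
from statistics import median

# Hypothetical submission records; every field name is an assumption.
submissions = [
    {"received": datetime(2024, 5, 1, 9, 0), "reviewed": datetime(2024, 5, 1, 13, 0),
     "human_touches": 0, "fields_extracted": 50, "fields_correct": 49},
    {"received": datetime(2024, 5, 1, 10, 0), "reviewed": datetime(2024, 5, 2, 10, 0),
     "human_touches": 2, "fields_extracted": 40, "fields_correct": 36},
    {"received": datetime(2024, 5, 2, 8, 0), "reviewed": datetime(2024, 5, 2, 10, 0),
     "human_touches": 0, "fields_extracted": 10, "fields_correct": 10},
]


def outcome_metrics(records):
    """Compute turnaround, straight-through rate, and extraction accuracy."""
    # Hours from submission receipt to underwriter review, per submission.
    hours = [(r["reviewed"] - r["received"]).total_seconds() / 3600 for r in records]
    # Share of submissions that needed no human intervention.
    stp_rate = sum(1 for r in records if r["human_touches"] == 0) / len(records)
    # Field-level accuracy pooled across all submissions.
    accuracy = (sum(r["fields_correct"] for r in records)
                / sum(r["fields_extracted"] for r in records))
    return {
        "median_turnaround_hours": median(hours),
        "stp_rate": stp_rate,
        "extraction_accuracy": accuracy,
    }


metrics = outcome_metrics(submissions)
print(metrics)
```

The median is used for turnaround so that one slow account does not dominate the figure; a mean or a percentile would be equally defensible depending on what leadership wants to track.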

FurtherAI's reporting surfaces these metrics mapped directly to production workflows. Ninety days into a commercial property deployment, an ops leader can pull a report showing a 40% reduction in submission prep time. That is the kind of number you take to a COO, not a screen recording of the AI running.

Control how AI is used and what leaves the platform

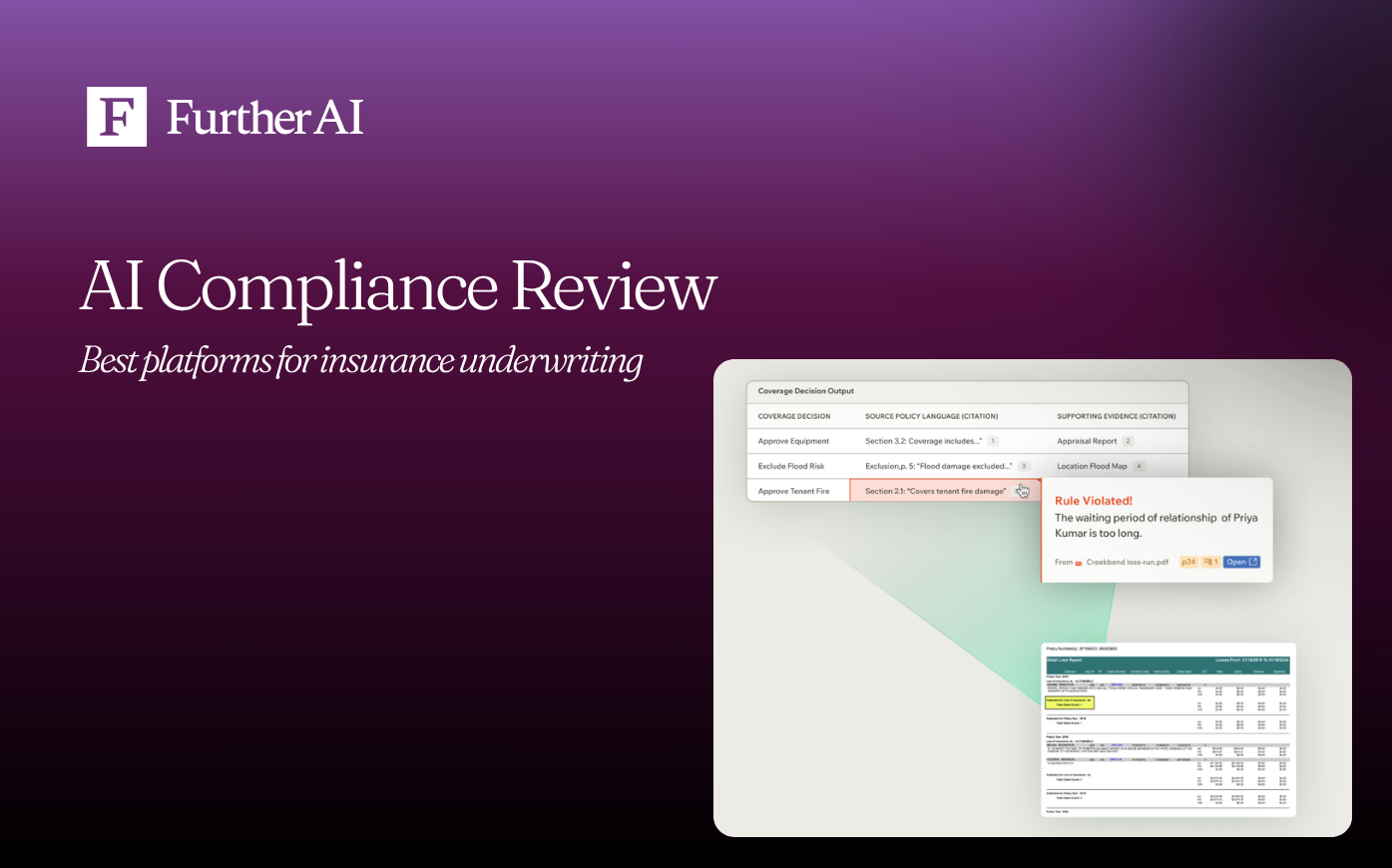

As deployment expands beyond a pilot team, access control becomes a real operational question. Submissions contain PII and proprietary risk details, reinsurance workflows involve sharing cedant data with external counterparties, and MGAs operating under delegated authority need controls that match the terms of their binding agreements.

A platform-wide permission structure that treats every user the same doesn't hold up here.

FurtherAI's admin controls let ops leaders configure access and data handling at the workflow level:

- Workflow-level access controls define which teams run which workflows. A commercial property team runs submission intake and SOV validation. A compliance team runs audit prep. Neither sees the other's queue, and neither can trigger workflows outside their configured workspace.

- Output sharing controls govern what data leaves the platform and where it goes. They decide what gets pushed to Guidewire or Salesforce, what stays within the platform, and what requires human intervention before it moves.

- Decision trail logging captures every AI action and human-in-the-loop decision automatically. When a Department of Insurance or regulatory body audits a specific submission or policy, the record is already organized, timestamped, and tied to the specific workflow execution. No reconstruction required.
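The pattern behind the first and third controls can be sketched in a few lines: a team-to-workflow map gates execution, and every action, allowed or denied, is written to a log keyed by execution ID. All names below (`WORKFLOW_ACCESS`, `run_workflow`, `record`, `trail_for`, the team and workflow identifiers) are hypothetical and do not describe FurtherAI's actual configuration or API.

```python
import json
from datetime import datetime, timezone

# Hypothetical team-to-workflow configuration; names are illustrative only.
WORKFLOW_ACCESS = {
    "commercial_property": {"submission_intake", "sov_validation"},
    "compliance": {"audit_prep"},
}

# Assumption: a real system would use append-only, durable storage instead.
audit_log = []


def record(execution_id, actor, action, detail):
    """Append one timestamped entry tied to a workflow execution."""
    audit_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "execution_id": execution_id,
        "actor": actor,
        "action": action,
        "detail": detail,
    })


def run_workflow(team, workflow, execution_id):
    """Start a workflow only if the team is configured for it; log either way."""
    allowed = workflow in WORKFLOW_ACCESS.get(team, set())
    action = "workflow_started" if allowed else "access_denied"
    record(execution_id, team, action, {"workflow": workflow})
    if not allowed:
        raise PermissionError(f"{team} is not configured for {workflow}")
    return f"{workflow} started for {team}"


def trail_for(execution_id):
    """Return every logged entry for one workflow execution, in order."""
    return [e for e in audit_log if e["execution_id"] == execution_id]


# Usage: an allowed run with a human decision, then a denied attempt.
run_workflow("commercial_property", "submission_intake", "exec-001")
record("exec-001", "underwriter_1", "human_approved", {"note": "extraction verified"})
try:
    run_workflow("compliance", "submission_intake", "exec-002")
except PermissionError as err:
    print(err)

print(json.dumps(trail_for("exec-001"), indent=2))
```

Because logging happens inside `run_workflow` rather than as a separate step, the trail exists whether or not anyone remembers to write it, which is the property an examination depends on.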

The governance layer isn't a reporting module you check after the fact. With FurtherAI, it runs inside every workflow, so the trail is always there and doesn't depend on someone remembering to log it.

If you're currently deploying FurtherAI and want to walk through the admin controls, reach out to your account team. If you want to see this in practice before you deploy, let's talk.
