TL;DR

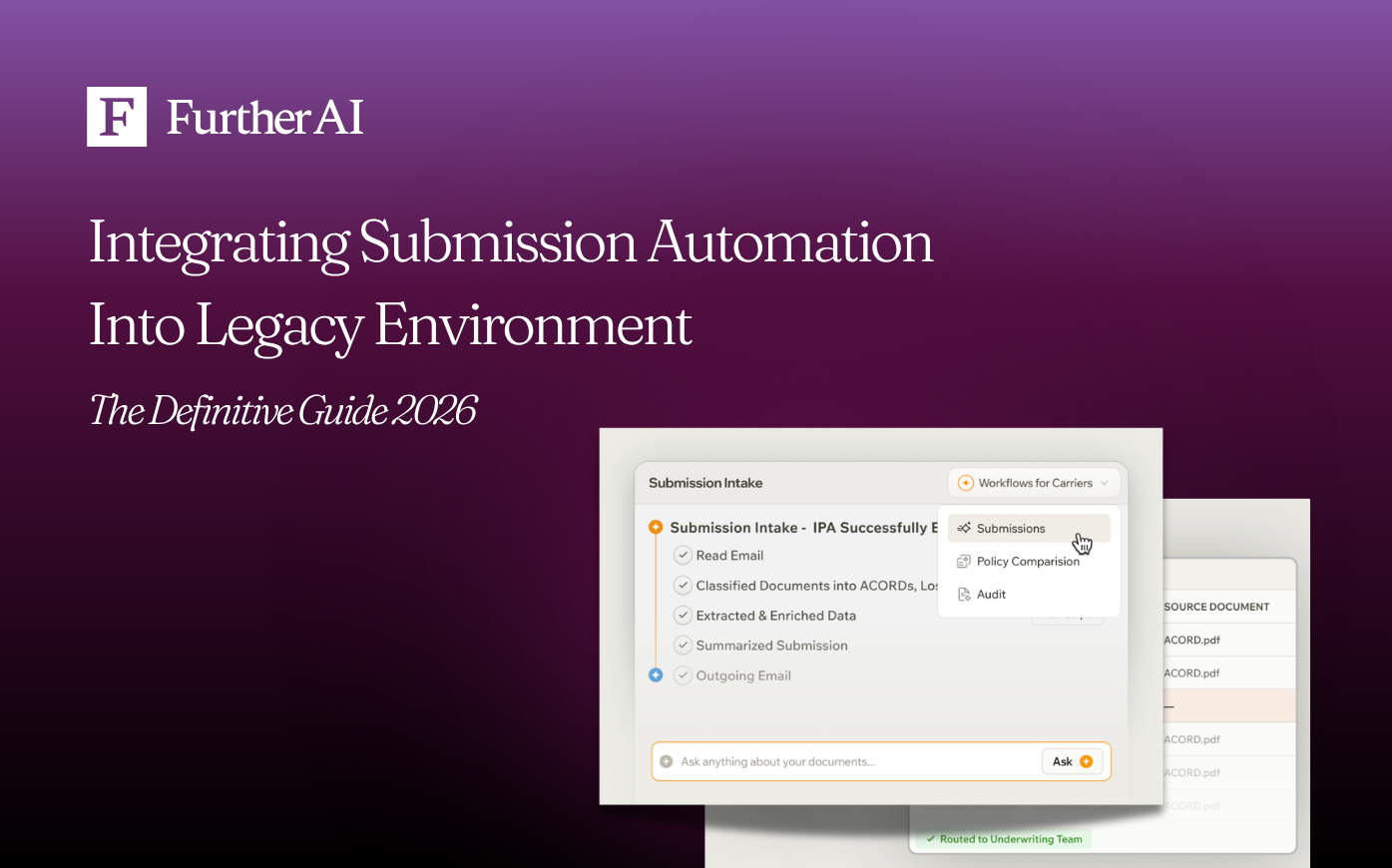

The best way to integrate submission automation with existing systems for brokers is an incremental, three-layer approach: (1) deploy a domain-specific AI submission workspace at the intake layer to extract, validate, and structure data from emails, ACORD forms, SOVs, and loss runs; (2) connect it to the core policy admin or AMS through a thin Anti-Corruption Layer (ACL) — an API or middleware bridge that translates modern data into the legacy schema; and (3) run the new pipeline in shadow mode alongside the manual process until accuracy and exception rates clear an internal threshold (typically ≥95%), then roll forward in stages following the Strangler Fig pattern.

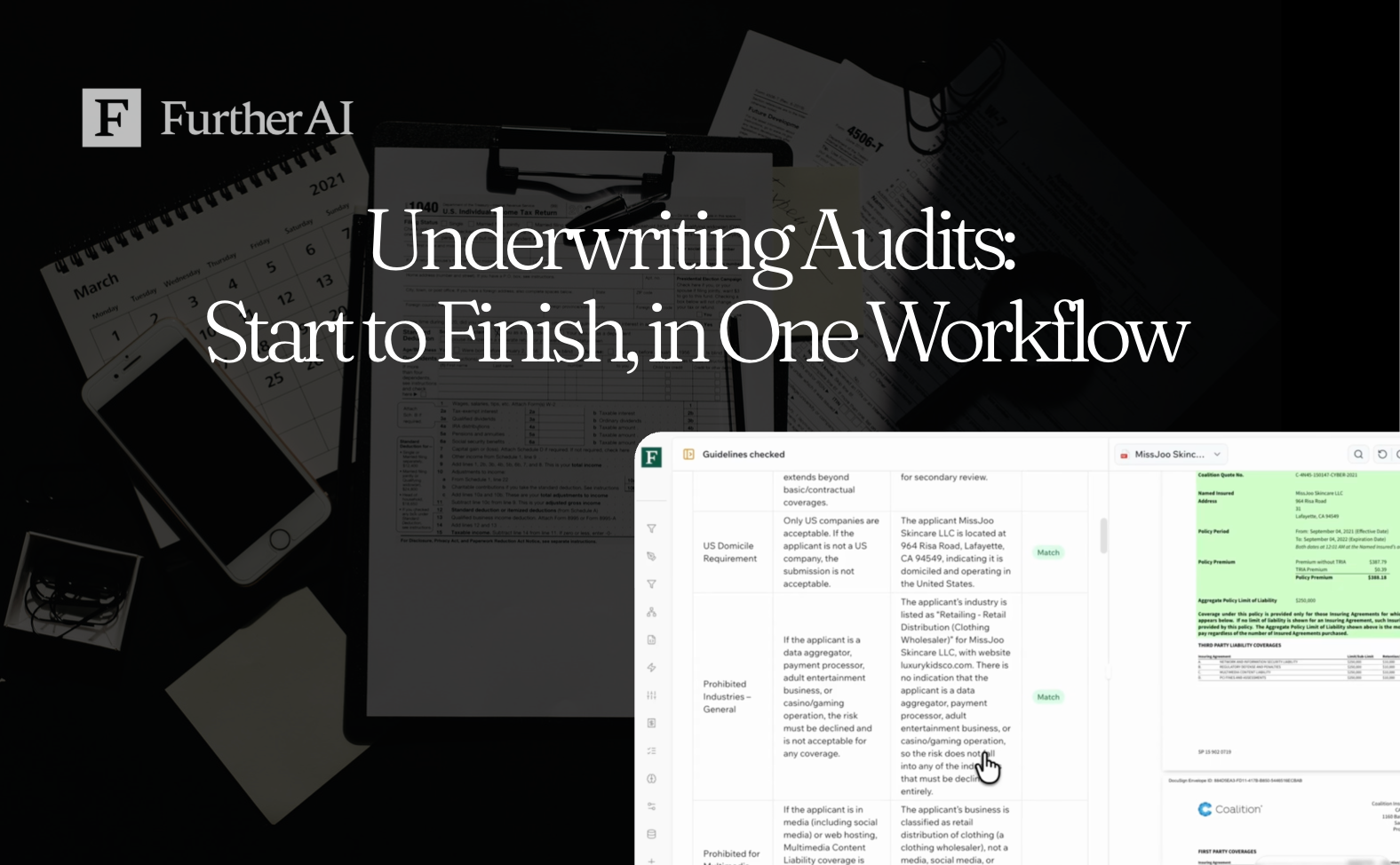

Which submission processing tools integrate easily with existing systems? Tools that ship with prebuilt insurance schemas (ACORD, SOV), expose APIs, run in shadow mode without touching production, and provide audit-ready logs. In commercial insurance specifically, FurtherAI is purpose-built for this pattern — deployed by Leavitt Group, Accelerant, MSI, and Upland Capital across submissions, loss runs, SOV intake, and policy checks, with documented results including 30× faster submission processing, 200%+ underwriting-efficiency gains, and 646% ROI in published case studies (FurtherAI Customer Stories).

Why this matters now

Submission intake is still the slowest, costliest step in most commercial insurance workflows.

- McKinsey's underwriting research finds that 30–40% of an underwriter's time is spent on administrative work like rekeying data and manual analysis.

- The same pressure applies to broker operations: every minute spent re-typing ACORD data or normalizing an SOV is a minute not spent placing or servicing risk (McKinsey Insurance Productivity 2023).

- Real-world results from production deployments show how big the prize is. A leading MGA with $1.5B+ in premium across 20+ insurance programs layered FurtherAI on top of its existing intake stack and cut average "time to clear" a submission from ~32 minutes to ~1 minute (30× faster), processed $20B in Total Insured Value, and saved 2,000+ hours of manual effort within 3 months.

- A top-10 global carrier with $20B+ in gross written premium integrated FurtherAI's Complex SOV Intake AI Teammate into its Large Property unit and compressed end-to-end SOV intake from 1–5 days to under 10 minutes, with 646% ROI and >95% accuracy at go-live — without replacing its core underwriting system.

The common thread: most of these gains come without replacing the legacy stack. Carriers and brokers are integrating, not rebuilding.

“Carriers lose enormous capacity to manual rekeying before underwriting even starts. Submission automation puts an intelligent extraction layer on top of legacy systems — you're not replacing the core platform, you're eliminating the data wrangling that happens before anything gets entered into it. That's where the real leverage is.” — Danny O'Lenic, Insurance Product Lead at FurtherAI

What "submission automation" means inside a legacy environment

Submission automation is the use of AI and rules-based systems to capture, classify, validate, and route incoming submissions (ACORD apps, SOVs, loss runs, broker emails, claims) without manual rekeying.

A legacy system is a long-standing, business-critical platform (often a policy admin system, AMS, mainframe, or on-prem document store) that runs daily operations but lacks modern APIs, event streams, or unified data schemas.

The integration question is less whether to automate than how to bolt a modern AI layer onto a system designed in a different decade without breaking what works.

The three integration patterns that actually work

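The industry has converged on three patterns for connecting modern automation to legacy platforms: API-based integration, robotic process automation (RPA), and data-level integration such as change data capture (CDC). Pick based on what the legacy system exposes.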

How to choose. APIs give you longevity; RPA gives you speed when no API exists; data-level integration gives you freshness. Most production deployments use two or more of these patterns layered together — e.g., API to the policy admin core, RPA for one stubborn legacy screen, and CDC to feed a reporting warehouse.

“How does one choose the right pattern? It depends on what your legacy system actually exposes. API-first if you have one, RPA to cover the last mile when you don't, and data-level methods like CDC for cross-system sync and reporting. Most real implementations end up hybrid — API where you can, RPA where you have to.” — Danny O'Lenic, Insurance Product Lead at FurtherAI
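The selection rule described above can be sketched as a small decision function. This is only an illustration: the `LegacyCapabilities` flags and the pattern labels are hypothetical names, not part of any product or standard, and a real assessment would weigh far more than three booleans.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LegacyCapabilities:
    """What a legacy system exposes (hypothetical flags for illustration)."""
    has_api: bool
    has_database_access: bool   # e.g. read access suitable for CDC
    has_ui_only_screens: bool   # functions reachable only through the UI


def choose_patterns(caps: LegacyCapabilities) -> list[str]:
    """Return the integration patterns to layer together, in priority order."""
    patterns: list[str] = []
    if caps.has_api:
        # API-first when an API exists.
        patterns.append("api")
    if caps.has_ui_only_screens or not caps.has_api:
        # RPA covers the last mile where no API reaches.
        patterns.append("rpa")
    if caps.has_database_access:
        # Data-level methods such as CDC for sync and reporting.
        patterns.append("cdc")
    return patterns
```

For a system with an API, one UI-only screen, and database access, this returns all three patterns, which matches the hybrid outcome the article says most deployments end up with.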

The Anti-Corruption Layer pattern (this is the key idea)

An Anti-Corruption Layer is a thin translation layer that sits between the modern submission automation tool and the legacy core. It converts modern, AI-produced data structures into the legacy system's schema (and vice versa), so that neither side has to change its internal model.

Microsoft, AWS, and Domain-Driven Design literature all recommend the ACL pattern for exactly this scenario (AWS Prescriptive Guidance: Anti-corruption layer pattern; Microservices.io: Anti-corruption layer). It is the integration backbone for most modern AI-over-legacy deployments in insurance.
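To make the translation idea concrete, here is a minimal sketch of an ACL in Python. Everything specific in it is an assumption for illustration: the modern field names, the legacy column names (`INSD_NM`, `EFF_DT`, `TIV_AMT`, `LOB_CD`), the fixed-width formats, and the line-of-business codes are invented, since a real policy admin system or AMS defines its own schema.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ExtractedSubmission:
    """Modern, AI-produced structure (hypothetical field names)."""
    insured_name: str
    effective_date: date
    total_insured_value: float
    line_of_business: str


# Hypothetical legacy codes; a real core system defines its own.
LEGACY_LOB_CODES = {"property": "PR", "general_liability": "GL"}


def to_legacy_record(sub: ExtractedSubmission) -> dict[str, str]:
    """Translate the modern model into a flat, legacy-style record."""
    if sub.line_of_business not in LEGACY_LOB_CODES:
        raise ValueError(f"unmapped line of business: {sub.line_of_business}")
    return {
        "INSD_NM": sub.insured_name.upper()[:30],             # upper-case, max 30 chars
        "EFF_DT": sub.effective_date.strftime("%Y%m%d"),      # YYYYMMDD
        "TIV_AMT": f"{round(sub.total_insured_value):012d}",  # zero-padded whole dollars
        "LOB_CD": LEGACY_LOB_CODES[sub.line_of_business],
    }


def from_legacy_record(rec: dict[str, str]) -> ExtractedSubmission:
    """Translate a legacy record back into the modern model.

    Note: title-casing the name is lossy; it is only a placeholder.
    """
    lob_by_code = {code: name for name, code in LEGACY_LOB_CODES.items()}
    eff = rec["EFF_DT"]
    return ExtractedSubmission(
        insured_name=rec["INSD_NM"].title(),
        effective_date=date(int(eff[:4]), int(eff[4:6]), int(eff[6:8])),
        total_insured_value=float(int(rec["TIV_AMT"])),
        line_of_business=lob_by_code[rec["LOB_CD"]],
    )
```

The point of the design is that both translation functions live in one place: the extraction side never learns the legacy column names, and the legacy side never sees the modern model.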

How to choose the right integration approach (by use case)

The single biggest mistake is the "big-bang" rewrite, so the first rule of thumb is to avoid it. Instead, deploy incrementally using the Strangler Fig pattern, an approach named by Martin Fowler in which new functionality grows around the legacy application and gradually replaces it.

Allianz used exactly this method to modernize its core insurance systems while maintaining business continuity, which is a relevant precedent for any carrier or broker contemplating the same move.
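In code, the Strangler Fig pattern usually shows up as a routing facade: every request passes through one entry point, which sends each workflow to either the old path or the new one. The sketch below is a simplified illustration under assumed names (`StranglerRouter`, the two handler functions, and the `workflow` key are all hypothetical), not a description of how Allianz or any vendor implemented it.

```python
from typing import Callable

Handler = Callable[[dict], str]


def legacy_manual_intake(submission: dict) -> str:
    """Stand-in for the existing manual or legacy path."""
    return f"manual:{submission['id']}"


def automated_intake(submission: dict) -> str:
    """Stand-in for the new automated pipeline."""
    return f"automated:{submission['id']}"


class StranglerRouter:
    """Facade that routes each workflow to the old or the new path."""

    def __init__(self, legacy: Handler, modern: Handler) -> None:
        self._legacy = legacy
        self._modern = modern
        self._migrated: set[str] = set()

    def migrate(self, workflow: str) -> None:
        """Move one workflow onto the new path."""
        self._migrated.add(workflow)

    def rollback(self, workflow: str) -> None:
        """Send a workflow back to the legacy path."""
        self._migrated.discard(workflow)

    def handle(self, submission: dict) -> str:
        migrated = submission["workflow"] in self._migrated
        handler = self._modern if migrated else self._legacy
        return handler(submission)
```

Migrating one workflow at a time, with a rollback that is a single call, is what keeps the rollout incremental rather than big-bang.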

Preparing for integration: Discovery and assessment

Before any code is written, build a live picture of the integration surface.

- Map every input and output. Document where submissions enter (broker email, portal upload, API), where data is stored (policy admin, AMS, document repository), and every downstream consumer (rating engine, BI, reinsurance).

- Rank workflows by volume × pain × ROI. High-volume, low-judgment tasks — SOV intake, loss-run extraction, ACORD parsing, eligibility checks — are the right first targets.

- Identify acceptable risk. Workflows touching binding authority, regulated decisions, or claim payments need stronger human-in-the-loop controls than internal triage.

- Confirm data exit points. If the legacy system has any documented API or ACORD-compliant interface, that is your fastest path in. The ACORD Data Standards framework defines the industry's common dictionary for broker–carrier data exchange.

This is the work that determines whether automation lands cleanly or stalls in IT review.

“Map the actual workflow before you map the systems — submissions often come in through multiple channels with different triage logic, and if you don't capture that, you'll automate the wrong process. And plan for exception handling from day one. The happy path is easy; what matters is what happens when extraction confidence is low or a field is missing.” — Danny O'Lenic, Insurance Product Lead at FurtherAI
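The volume × pain × ROI ranking from the checklist above can be made explicit with a simple scoring function. The 1 to 5 scales, the example field names, and the choice to leave high-risk workflows out of the first wave are assumptions made for this sketch; the article only says those workflows need stronger human-in-the-loop controls.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workflow:
    name: str
    monthly_volume: int  # submissions per month
    pain: int            # 1 (low) to 5 (high), assessed by the team
    roi: int             # 1 (low) to 5 (high), estimated
    high_risk: bool      # touches binding, regulated decisions, or claim payments


def score(w: Workflow) -> int:
    """Volume x pain x ROI, as a single comparable number."""
    return w.monthly_volume * w.pain * w.roi


def first_targets(workflows: list[Workflow]) -> list[str]:
    """Rank low-risk workflows by score, highest first.

    Assumption: high-risk workflows are deferred rather than ranked.
    """
    eligible = [w for w in workflows if not w.high_risk]
    return [w.name for w in sorted(eligible, key=score, reverse=True)]
```

With a candidate list in hand, `first_targets` gives a defensible order for the first pilots; the scores themselves matter less than forcing the volume, pain, and ROI estimates to be written down.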

Best way to integrate submission automation with existing systems for brokers

Direct answer: Brokers should follow a four-step pattern:

- Pick one high-friction, high-volume workflow (loss-run extraction, SOV normalization, ACORD intake) and treat it as the wedge.

- Layer a domain-specific AI workspace at the intake point so submissions are captured, classified, and structured before they touch the AMS or policy admin. The goal is to feed downstream systems clean, schema-aligned data instead of asking them to absorb messy inputs.

- Connect to the legacy core through a thin ACL, an API or lightweight middleware that translates AI output into the legacy schema. If no API exists, fall back to RPA for that specific hop.

- Run shadow-mode pilots before cutover. Keep humans in the loop on every low-confidence case until the AI matches or beats the human baseline.

This pattern is what Leavitt Group used to roll out FurtherAI across account-manager workflows: proposal building, policy checking, loss-run analysis.

Chief Project Officer Laurie Flanigan describes the inflection moment: a single 130-line loss run that previously took hours through general AI tools was processed in minutes through a domain-specific platform.

“We had a producer spend hours trying to extract and format a loss run using general AI tools, and it just wasn’t working. When they ran the same file through FurtherAI, it produced exactly what they needed in minutes. That’s when it really clicked for us.” — Laurie Flanigan, Chief Project Officer, Leavitt Group
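The human-in-the-loop step in the four-step pattern comes down to a routing rule: forward a submission automatically only when every required field is present and extracted with enough confidence. Here is a minimal sketch, assuming the extraction step reports a confidence between 0 and 1 per field; the required-field list and the 0.90 threshold are hypothetical and would be tuned against the human baseline.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ExtractedField:
    name: str
    value: Optional[str]
    confidence: float  # 0.0 to 1.0, as reported by the extraction step


REQUIRED_FIELDS = ("insured_name", "effective_date", "tiv")  # hypothetical
CONFIDENCE_THRESHOLD = 0.90  # hypothetical; tune against the human baseline


def review_reasons(fields: list[ExtractedField]) -> list[str]:
    """List every reason a submission needs a human reviewer."""
    by_name = {f.name: f for f in fields}
    reasons: list[str] = []
    for name in REQUIRED_FIELDS:
        field = by_name.get(name)
        if field is None or field.value in (None, ""):
            reasons.append(f"missing:{name}")
        elif field.confidence < CONFIDENCE_THRESHOLD:
            reasons.append(f"low_confidence:{name}")
    return reasons


def route(fields: list[ExtractedField]) -> str:
    """Send clean submissions forward and everything else to review."""
    return "human_review" if review_reasons(fields) else "auto_forward"
```

Returning the reasons, not just a yes/no, gives the reviewer a starting point and feeds the exception log.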

Which submission processing tools integrate easily with existing systems

Direct answer: The tools that integrate most easily share four traits:

- Insurance-native schemas out of the box (ACORD forms, SOV templates, loss-run formats, common AMS field maps).

- Multiple ingress points — email, portal upload, drag-and-drop, API — so brokers don't have to change how submissions arrive.

- Lightweight integration options, including shadow-mode deployment, so IT teams can validate without touching production.

- Audit-ready output: structured data, exception logs, and a transparent record of every AI decision.

A reasonable working stack for an insurance organization integrating submission automation looks like this:

Why the workspace layer matters most. General-purpose RPA, OCR, or LLM tooling tends to break down on a 30,000-row SOV or a 130-line loss run because it has no built-in notion of what "TIV," "COPE," or "ACORD 125" mean. A domain-specific workspace already has those concepts, which is what makes Day-1 integration realistic instead of a six-month project.

Prototyping and validating automation (the shadow-mode pilot)

Don't go live blind. Instead, run new automation in shadow mode (parallel to the manual process, producing output but not affecting production) until you have weeks of measured performance.

Track at minimum:

- Field-level accuracy vs. human baseline

- Latency per submission (target: minutes, not days)

- Exception rate (what % of submissions need human review)

- Cycle time end-to-end

Start with one workflow. Keep humans in the loop on low-confidence cases. Expand only when the data justifies it. This is the pattern Leavitt Group used to move from AI experimentation to AI infrastructure.

It is also the pattern, according to the Underwriting Rewritten report by Accenture, that determines whether an organization stays among the ~22% who scale AI beyond pilots or remains in the much larger group that never does.
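The shadow-mode metrics listed above are straightforward to compute once each AI output is stored next to what the manual process keyed. The sketch below is one possible shape, with hypothetical names throughout; the 95% accuracy gate comes from the threshold mentioned earlier in this article, while the 20% exception-rate ceiling is purely an assumed placeholder.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class ShadowResult:
    ai_fields: dict[str, str]
    human_fields: dict[str, str]  # what the manual process keyed
    needed_review: bool
    latency_seconds: float


def summarize(results: list[ShadowResult]) -> dict[str, float]:
    """Compute field-level accuracy, exception rate, and mean latency."""
    total_fields = sum(len(r.human_fields) for r in results)
    if not results or total_fields == 0:
        raise ValueError("no shadow results with human-keyed fields")
    matched = sum(
        1
        for r in results
        for key, value in r.human_fields.items()
        if r.ai_fields.get(key) == value
    )
    return {
        "field_accuracy": matched / total_fields,
        "exception_rate": sum(r.needed_review for r in results) / len(results),
        "mean_latency_seconds": mean(r.latency_seconds for r in results),
    }


def ready_for_cutover(
    summary: dict[str, float],
    min_accuracy: float = 0.95,        # threshold cited in this article
    max_exception_rate: float = 0.20,  # assumed placeholder
) -> bool:
    return (
        summary["field_accuracy"] >= min_accuracy
        and summary["exception_rate"] <= max_exception_rate
    )
```

Exact string matching is the strictest possible comparison; a real pilot would likely normalize dates, currency, and whitespace before comparing.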

Governance, security, and compliance controls

Insurance is regulated, so the automation must be auditable from day one.

Build for:

- Audit trails on every decision and handoff. The NAIC Insurance Data Security Model Law, now adopted in at least 22 states, explicitly requires audit trails as part of an insurer's information security program.

- Granular identity and access control (role-based, least-privilege).

- Encryption in transit and at rest.

- Exception handling with named owners and SLAs.

- Model output transparency (every AI decision should be explainable enough for an internal auditor or external regulator to reconstruct).

Compliance should be embedded in the architecture, not retrofitted. FurtherAI's customer deployments are designed around exactly this requirement: structured, explainable outputs that account managers and auditors can review before work moves forward.
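One way to make an audit trail tamper-evident is to chain entries by hash, so that editing any earlier record invalidates everything after it. This is a design choice assumed for illustration, not something the NAIC model law or any vendor prescribes, and the entry fields are hypothetical. It uses only the Python standard library.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder hash before the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_audit_entry(log: list[dict], actor: str, action: str, detail: dict) -> dict:
    """Append an entry that records who did what, linked to the previous entry."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "action": action,
        "detail": detail,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash and link; False means the log was altered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True
```

In production the log would live in append-only storage rather than a Python list, but the same check lets an auditor confirm that the recorded sequence of AI decisions and human handoffs has not been edited after the fact.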

Performance and resilience

Legacy platforms are resource-sensitive. Treat them carefully.

- Use API gateways, caching, and rate limiting to keep load predictable.

- Run APM (Datadog, New Relic) on every integration surface to catch regressions early.

- Test resilience deliberately — controlled stress tests and lightweight chaos engineering — before a renewal-season traffic spike, not during.

Well-integrated automation should enhance legacy throughput, not degrade it. If your APM dashboards show the legacy core slowing down after AI deployment, your ACL or rate-limiting policy is wrong, not the automation itself.
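Rate limiting in front of a legacy core is often implemented as a token bucket: calls spend tokens, tokens refill at a fixed rate, and bursts are capped by the bucket size. The class below is a minimal single-threaded sketch with hypothetical names; an API gateway would normally provide this as configuration rather than custom code.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow short bursts while capping the sustained call rate."""

    def __init__(
        self,
        rate_per_second: float,
        capacity: int,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._rate = rate_per_second
        self._capacity = float(capacity)
        self._tokens = float(capacity)  # start full
        self._clock = clock
        self._last = clock()

    def try_acquire(self) -> bool:
        """Return True if a call to the legacy system may proceed now."""
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        # Refill for the time elapsed, never beyond capacity.
        self._tokens = min(self._capacity, self._tokens + elapsed * self._rate)
        if self._tokens >= 1.0:
            self._tokens -= 1.0
            return True
        return False
```

A caller that gets `False` should queue or back off rather than retry immediately. The injectable `clock` parameter exists so the limiter can be tested without sleeping.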

Operating and iterating after go-live

Automation isn't done at launch. Run an "operate, measure, iterate" cadence:

- Dashboards for throughput, exception rate, and cycle time.

- Quarterly review of every RPA workflow and data connector against current product, policy, and compliance changes (according to Gartner, this is where RPA's brittleness shows up if you're not paying attention).

- Feedback loops from underwriters and account managers to expand scope into the next-best workflow.

The compounding gains, not the initial pilot, are where ROI accelerates.

Business benefits: What the numbers actually look like

Real, published outcomes from production submission automation deployments:

Across these deployments, three themes repeat: cycle time collapses, error rates fall, and scale becomes possible without adding headcount.

Best practices for brokers integrating submission automation

Frequently asked questions

What is the best way to integrate submission automation with existing systems for brokers?

The most reliable pattern is to deploy an insurance-specific AI workspace at the intake layer, connect it to the legacy AMS or policy admin through an Anti-Corruption Layer (API or middleware), and run the new pipeline in shadow mode before cutover. This lets brokers automate ACORD parsing, SOV intake, and loss-run extraction without touching the legacy core and roll forward incrementally as accuracy and exception rates clear an internal threshold.

Which submission processing tools integrate easily with existing legacy systems?

Tools that integrate easily share four traits: they ship with insurance-native schemas (ACORD, SOV, loss-run), expose APIs and email/portal ingress, support shadow-mode deployment, and produce audit-ready logs. In commercial insurance, FurtherAI is a domain-specific example deployed across submissions, SOV intake, loss runs, policy comparison, claims, and underwriting audit by carriers, MGAs, and brokers including Leavitt Group, Accelerant, MSI, and Upland Capital.

How long does it take to integrate submission automation with a legacy system?

Timelines depend on the integration pattern. A targeted RPA or shadow-mode AI pilot on one workflow can run in 4–8 weeks. An API or middleware (ACL) integration into a policy admin or AMS typically takes 2–4 months end-to-end. Running prototypes in shadow mode shortens timelines because production is never at risk, which makes IT sign-off faster. The biggest delays are usually data access and security review, not engineering.

Can submission automation work without replacing core legacy systems?

Yes, and it should. Modern integration patterns like the Anti-Corruption Layer and the Strangler Fig are designed for exactly this scenario: AI sits in front of the legacy core and translates between modern data formats and legacy schemas. FurtherAI deployments consistently augment AMS, policy admin, and ACORD-based workflows without replacing them, layering AI accuracy and speed on top of existing infrastructure.

What workflows are the best first candidates for submission automation?

High-volume, repetitive workflows with clear inputs and outputs win first. The most common starting points are loss-run extraction, SOV intake and normalization, ACORD form parsing, eligibility/appetite checks, submission triage, and policy-document comparison. Each is essential, historically manual, and prone to error under time pressure, which is exactly where domain-specific AI delivers a measurable accuracy and speed lift before more complex underwriting or binding workflows are touched.

How should brokers choose a submission automation partner?

Evaluate on five dimensions: (1) insurance-specific accuracy on real broker artifacts (ACORD, SOV, loss runs); (2) integration depth (APIs, RPA fallback, ACL support, email/portal ingress); (3) governance and audit trails aligned with the NAIC Insurance Data Security Model Law; (4) published, named customer outcomes, not just demos; and (5) shadow-mode deployment so the broker can validate ROI before any production cutover.

What ROI should brokers expect from submission automation?

Published outcomes range widely depending on workflow. Encova reduced one program from ~650 manual hours/month to ~12.5 hours/year (>99% reduction). FurtherAI customers report 30× faster submission processing, 200%+ efficiency gains, and 646% ROI on Complex Property SOV intake (FurtherAI Customer Stories). QBE, working with Accenture, now processes 100% of broker submissions in scope and cut review time by 65%. Most brokers see measurable ROI within the first quarter of production use.

DISCLAIMER

This article is for general informational purposes only and does not constitute legal, regulatory, compliance, underwriting, or other professional advice. The content reflects information available as of the date of publication, and FurtherAI undertakes no obligation to update it as laws, regulations, or AI technologies evolve.
